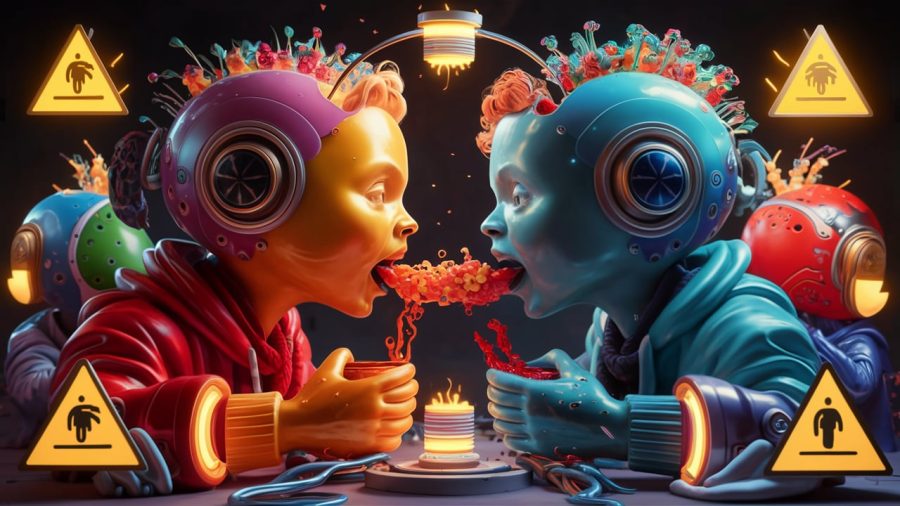

Cannibalizing generative AI models could ‘go mad’ over time

Generative AI models that train only on one another’s output could end up ‘going mad’ over time, degrading the quality of what they produce.

A new study by researchers at Rice University and Stanford University highlights how the quality of generative AI could decline if AI engines are trained on machine-made input rather than human-made data. In essence, if AI models learn from one another’s output in a cannibalizing fashion, the long-term quality of the systems could suffer.

How can generative AI models go ‘mad’?